During the deployment of Sim Update 13 on September 26 and Sim Update 14 on December 5, many players experienced issues with long or stuck downloads during the installation process. In both cases, we closely tracked the issue as it was occurring and escalated to the appropriate support teams as soon as the incident was confirmed. Each was resolved and normal service restored approximately six hours after the first escalation.

We realize that having two major updates in a row marred by deployment issues has resulted in a loss of trust from our player community. We sincerely apologize to everyone who experienced long or stuck downloads and was unable to enjoy these new Sim Updates immediately upon their release.

Whenever incidents such as these occur, our tech teams conduct a thorough review to identify the root cause. In the interest of full transparency, we would like to share some information from these reviews with you.

Please note that this article is of a technical nature and may not be easily understood by all readers. Throughout this report, we will be referring to the following technologies:

- Azure PlayFab: Microsoft’s cloud infrastructure used to power online services for thousands of PC, console, and mobile games (including Microsoft Flight Simulator).

- Content Delivery Network (CDN): a collection of servers physically located in multiple different geographic regions, each with mirrored content. The purpose of a CDN is to more evenly distribute network load across many different data centers.

September 26: Sim Update 13 Release

Sim Update 13 (SU_13) released at approximately 8:00am Pacific Daylight Time (1500 UTC) on September 26. As with every update, we had multiple employees on duty to ensure the launch went smoothly, including the Release team who were monitoring network and server data and the Community Managers who were tracking player comments on our official forums, Discord server, and other social platforms such as X, Reddit, and Facebook for any reports of trouble.

Initially, the deployment appeared to be going well, with all status metrics “in the green” immediately following the release. However, as the number of players simultaneously downloading the update increased significantly, problems started to emerge. From looking at both players’ reports about slow or stuck downloads and network monitoring data showing some of the metrics “in the red”, it became clear that there was an issue with the SU_13 launch. At this point, the Release team created an Incident Report and contacted the PlayFab team for additional support.

After analyzing the problem with PlayFab engineers, we discovered that the servers distributing Shared Access Signature (SAS) tokens to authorize access to the SU_13 content had a bug. Instead of generating a new token once every several hours, new tokens were being created every few seconds for each requested content package.

Every time a token is renewed, it creates a new entry in the CDN cache, and the CDN considers that there is new content to pull into the cache from its internal storage. This means that CDN servers all over the world were pulling the same file for every SAS token generated – multiple times every few seconds! This quickly overloaded their capacity.
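To illustrate the effect, here is a minimal sketch. It is not PlayFab's or the CDN's actual code. It assumes a cache that keys each entry on the full request URL, token included. The class name, the `sig` query parameter, and the `cdn.example.invalid` host are all hypothetical.

```python
class UrlKeyedCache:
    """Toy edge cache that treats every distinct URL (token included) as new content."""

    def __init__(self):
        self.entries = {}
        self.origin_fetches = 0

    def get(self, url: str) -> bytes:
        if url not in self.entries:
            # Cache miss: the file has to be pulled from origin storage again.
            self.origin_fetches += 1
            self.entries[url] = b"package-bytes"
        return self.entries[url]


def simulate(requests: int, requests_per_token: int) -> int:
    """Return how many origin fetches occur when the token changes every N requests."""
    cache = UrlKeyedCache()
    for i in range(requests):
        token = i // requests_per_token  # hypothetical token identifier
        cache.get(f"https://cdn.example.invalid/su13/package.bin?sig={token}")
    return cache.origin_fetches


if __name__ == "__main__":
    print("new token on every request:", simulate(1000, 1))
    print("one long-lived token:      ", simulate(1000, 1000))
```

In this toy model, a fresh token on every request turns every request into an origin fetch, while a single long-lived token needs only one.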

This issue hadn’t been identified previously because under normal conditions the requests to the CDN were limited enough that the Azure cloud infrastructure was able to handle the load even with the bug of new tokens being created every few seconds. However, when SU_13 was released, we suddenly had a large surge of requests to download new packages.

While the CDN itself was not overwhelmed, the backing Azure blob storage account that is the source of all CDN data was being throttled. This was due to a bug in our dependency injection layer that disabled our intermediate distributed cache for the requests to get the content URLs. Our intermediate cache refresh triggered at 15-second intervals on each machine, and each refresh created a new token instead of first checking the distributed cache (Redis) to see whether a token was already available.
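The intended "check the shared cache first" behavior can be sketched as follows. This is an assumption-laden illustration rather than the real service: an in-memory dictionary stands in for Redis, the class and method names are hypothetical, and a production version would also need an atomic "set if absent" step to avoid races between servers.

```python
import itertools


class SharedCache:
    """In-memory stand-in for a distributed cache such as Redis."""

    def __init__(self):
        self._data = {}

    def get(self, key, now):
        entry = self._data.get(key)
        if entry is None or entry[1] <= now:
            return None  # missing or expired
        return entry[0]

    def set(self, key, value, expires_at):
        self._data[key] = (value, expires_at)


class TokenServer:
    """One of several servers handing out tokens for content packages."""

    _ids = itertools.count(1)  # shared counter so every generated token is unique

    def __init__(self, shared_cache, token_ttl_seconds, use_shared_cache=True):
        self.shared_cache = shared_cache
        self.token_ttl_seconds = token_ttl_seconds
        self.use_shared_cache = use_shared_cache
        self.generated = 0

    def get_token(self, package, now):
        if self.use_shared_cache:
            cached = self.shared_cache.get(package, now)
            if cached is not None:
                return cached  # reuse the token another server already created
        token = f"sig-{next(TokenServer._ids)}"
        self.generated += 1
        self.shared_cache.set(package, token, now + self.token_ttl_seconds)
        return token


if __name__ == "__main__":
    cache = SharedCache()
    server_a = TokenServer(cache, token_ttl_seconds=900)
    server_b = TokenServer(cache, token_ttl_seconds=900)
    print(server_a.get_token("package.bin", now=0))
    print(server_b.get_token("package.bin", now=15))  # same token, nothing new generated
```

Setting `use_shared_cache=False` mimics the bug: every refresh produces a different token even though a valid one already exists.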

To rectify the issue, PlayFab engineers made a fix to their servers such that they didn’t generate a new SAS token every few seconds for each file requested but instead only once every 15 minutes on each of their servers. On the Microsoft Flight Simulator side, our team reduced the number of downloads that could occur in parallel for each player from eight (8) to one (1) to relieve some of the pressure on the CDN. This meant that individual players would experience reduced download speeds but all requests could be processed successfully.
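A client-side cap on parallel downloads can be sketched with a semaphore. This is only an illustration of the general technique, not the simulator's actual download code. `download_all`, `FakeFetcher`, and the package names are hypothetical.

```python
import asyncio


async def download_all(packages, max_parallel, fetch):
    """Fetch every package while allowing at most `max_parallel` fetches at once."""
    semaphore = asyncio.Semaphore(max_parallel)

    async def worker(name):
        async with semaphore:  # wait here if the limit is already reached
            return await fetch(name)

    return await asyncio.gather(*(worker(name) for name in packages))


class FakeFetcher:
    """Pretend downloader that records the peak number of simultaneous fetches."""

    def __init__(self):
        self.active = 0
        self.peak = 0

    async def __call__(self, name):
        self.active += 1
        self.peak = max(self.peak, self.active)
        await asyncio.sleep(0)  # yield control so other tasks get a chance to start
        self.active -= 1
        return f"{name}: done"


if __name__ == "__main__":
    packages = [f"package-{i}" for i in range(20)]
    for limit in (8, 1):
        fetcher = FakeFetcher()
        asyncio.run(download_all(packages, limit, fetcher))
        print(f"limit={limit} peak simultaneous fetches={fetcher.peak}")
```

Lowering the limit trades per-player speed for a smaller number of simultaneous requests hitting the backend, which is the trade-off described above.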

These measures were sufficient to resolve the issue for the SU_13 launch. Our network monitoring indicators returned to a green status, and player reports confirmed that downloads were now completing successfully. After declaring the incident resolved, the Flight Simulator team restored the number of parallel downloads back to eight.

Despite this resolution, we knew there was still an outstanding issue: a shared cache between the servers was supposed to prevent duplicate tokens. Unfortunately, this feature had been broken by a change made several months earlier, and the breakage was not detected until the SU_13 deployment incident.

December 5: Sim Update 14 Release

Sim Update 14 (SU_14) launched on December 5 at approximately 8:00am Pacific Standard Time (1600 UTC). As before, we had teams actively tracking the status of the release, both in terms of network analytics data and player comments on the forums and other social networks.

As with the SU_13 launch, we identified an issue with slow and stuck downloads shortly after the release. The errors had a slightly different cause this time. In September, the errors were caused by exceeding the maximum number of transactions per second; this time, the errors were related to hitting the limit on maximum egress bytes per second. The end result was the storage refusing requests from the CDN and generating 503 (Service Unavailable) errors. Once again, we created an Incident Report and escalated to the PlayFab team for additional technical support.

To resolve the issue, we implemented the following measures:

- On the Microsoft Flight Simulator side, we reduced the number of parallel downloads per client from eight (8) to two (2) at approximately 1930 UTC (3.5 hours after the launch). This had the effect of reducing the quantity of 503 errors but didn’t fix the issue for players.

- After a more detailed analysis of the errors, the PlayFab team realized that their previous fix deployed for SU_13 was insufficient. Fixes for the remaining issues (broken shared cache and SAS token generation every 15 minutes) had to be deployed to restore normal service.

- PlayFab engineers deployed the final fix on their servers at approximately 2045 UTC. This new version restored the shared cache, generated only one SAS token per piece of content, and renewed an SAS token only when the previous one was about to expire (approximately once every 48 hours).

- By 2200 UTC, we saw the rate of download errors drop to only 0.1%.

- We additionally realized that the backoff system timing parameters were too long. This generated frustration for players who didn’t understand why the download could pause for 10 minutes before resuming. We subsequently changed the timing to a maximum wait of one (1) minute.
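The effect of capping the retry wait, as in the last measure above, can be sketched as follows. The report does not describe the actual backoff algorithm, so this assumes a simple exponential backoff. The function name, base delay, and growth factor are hypothetical. Only the 10-minute and 1-minute caps come from the text.

```python
def backoff_delays(attempts, base_seconds=1.0, factor=2.0, max_wait_seconds=60.0):
    """Return the wait before each retry, growing exponentially up to a cap."""
    return [min(base_seconds * factor ** n, max_wait_seconds) for n in range(attempts)]


if __name__ == "__main__":
    # Old behavior (assumed): waits can grow to 10 minutes.
    print(backoff_delays(11, max_wait_seconds=600.0))
    # New behavior: no single wait exceeds one minute.
    print(backoff_delays(11, max_wait_seconds=60.0))
```

With the lower cap, a download that keeps failing is retried at least once a minute instead of appearing frozen for up to ten.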

At approximately 2200 UTC on December 5, normal service was restored, the incident was declared resolved, and players began reporting that downloads were completing successfully. The team subsequently reset the number of parallel downloads per client back to eight, and all status monitoring metrics on PlayFab’s CDN were back in the green.
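The renewal rule from the final fix (one token per piece of content, renewed only when it is about to expire) can be sketched as follows. This is a hedged illustration, not PlayFab's implementation. The 48-hour lifetime mirrors the figure quoted above, while the one-hour renewal margin and all names are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Token:
    value: str
    expires_at: float  # seconds on an arbitrary clock


class RenewingTokenStore:
    """Keeps one token per content item and renews it only near expiry."""

    def __init__(self, lifetime_seconds=48 * 3600, renew_margin_seconds=3600):
        self.lifetime_seconds = lifetime_seconds
        self.renew_margin_seconds = renew_margin_seconds  # assumed safety margin
        self._tokens = {}
        self.issued = 0

    def get(self, content_id, now):
        token = self._tokens.get(content_id)
        if token is None or token.expires_at - now <= self.renew_margin_seconds:
            # No token yet, or the current one is about to expire: issue a new one.
            self.issued += 1
            token = Token(f"{content_id}-sig-{self.issued}", now + self.lifetime_seconds)
            self._tokens[content_id] = token
        return token


if __name__ == "__main__":
    store = RenewingTokenStore()
    # One request every 15 seconds for 24 hours still yields a single token.
    for now in range(0, 24 * 3600, 15):
        store.get("package.bin", now)
    print("tokens issued in 24 hours:", store.issued)
```

Because the token stays the same between renewals, the CDN keeps serving its existing cached copy rather than treating each request as new content.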

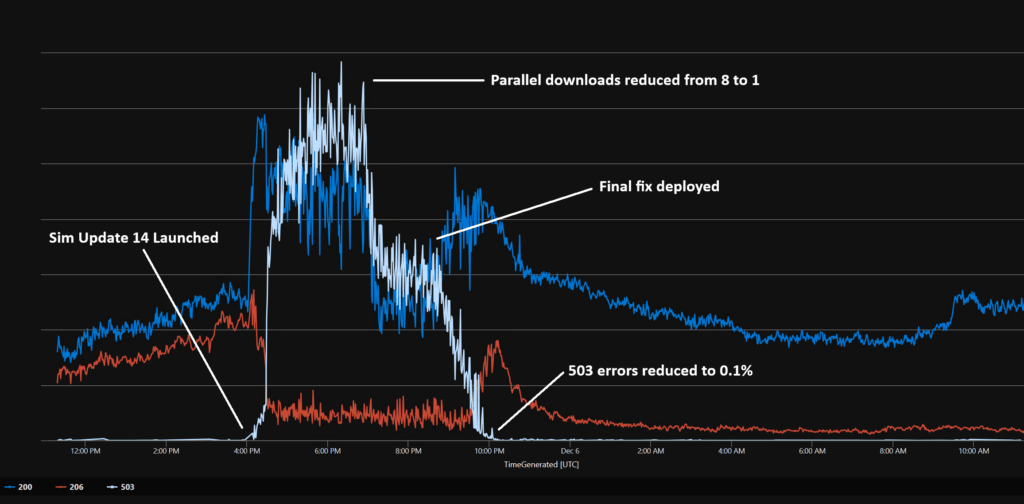

For a visualization of the release timeline, please see the graph above, which shows the following status codes:

- Blue: 200 (OK success status)

- Red: 206 (Partial Content success status)

- White: 503 (Service Unavailable error)

Note that immediately following the SU_14 launch, there was a large spike in 200 and 206 codes, indicating that the first players to download SU_14 were able to do so successfully. However, this was shortly followed by a surge in 503 errors, showing where the problems started.

When the attempted fix of reducing the number of parallel downloads per client was implemented, you can see that the number of 503 errors was reduced by about half, but the number of successful downloads (shown by the blue and red 200 and 206 lines) didn’t have a corresponding increase. Once the final fix was deployed, 503 errors dropped to almost zero and downloads were once again completing successfully.

While it is regrettable that this issue occurred over two major releases, we are confident that a permanent fix to the SAS token generation bug is now in place which will prevent this from happening again.

We hope you have found this report about the SU_13 and SU_14 download issues and the measures the team took to resolve them interesting. Once again, we wish to offer our most sincere apologies to all players who were affected by this incident. Now that normal service has been restored, we hope everyone is enjoying Sim Update 14. Happy flying!

Sincerely,

Microsoft Flight Simulator Team